Big data is everywhere – literally.

The other evening I watched a very interesting BBC Panorama programme titled “The Age of Big Data” (note that the programme can only be viewed in the UK). As readers know, I am not only a big fan of Big Data; I live and breathe it every day. Kred has access to 150 billion Twitter posts, collected over the last 4 years, in a 400 TB data mine.

The first segment of the programme looked at a pilot programme between the Los Angeles Police Department (LAPD) and the University of California, Los Angeles (UCLA). The LAPD's Foothill division is trialling an algorithm that attempts to predict where in the division crimes are most likely to occur while its patrols are on duty.

Professor Jeff Brantingham and his team at UCLA examined 13 million crimes recorded over 80 years and used them to start predicting where crimes might occur in the future. Brantingham began his research by trying to piece together the patterns of human behaviour within the LAPD crime data.

He collaborated with Professor George Mohler from Santa Clara University, who is an expert in pattern-forming systems. What fascinated me about the research was that they started with a mathematical model that was already being used in California to look at earthquake aftershocks.

While there is no mathematical model to predict huge earthquakes such as the 1989 Loma Prieta earthquake that devastated parts of the San Francisco Bay area, earthquake aftershocks can be predicted.

After a large earthquake, there is a high probability that aftershocks will follow nearby in space and time. Mohler developed an algorithm to understand the clustering patterns created by aftershocks.

The same clustering patterns turn up in crime data, so the team looked at the “aftershocks of crime”. Mohler explains that “after a crime occurs, there is an elevated risk, and that risk travels to neighbouring regions.” You can download the detailed presentation from Brantingham and Mohler that looks at the whole pilot.
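The aftershock idea can be made concrete with a self-exciting (Hawkes-type) point process, the family of models used for earthquake aftershocks. The sketch below is a simplified illustration, not the model the LAPD or the UCLA team actually deployed: the `Event` and `intensity` names, the Gaussian spatial spread, the exponential time decay and every parameter value are assumptions chosen for readability. Risk at a location is a constant background rate plus a contribution from each earlier crime that fades with distance and with time.

```python
import math
from dataclasses import dataclass


@dataclass
class Event:
    """A past crime: position in km from an arbitrary origin, time in days."""
    x: float
    y: float
    t: float


def intensity(x, y, t, events, mu=0.1, theta=0.5, omega=0.2, sigma=0.1):
    """Risk rate at (x, y) and time t under a space-time self-exciting model.

    Hypothetical illustration; every parameter value here is made up.
    mu:    constant background rate
    theta: expected number of follow-on crimes triggered by each crime
    omega: how fast the triggered risk fades, per day
    sigma: how far the triggered risk spreads, in km
    """
    rate = mu
    for e in events:
        dt = t - e.t
        if dt <= 0:
            continue  # only strictly earlier crimes raise the current risk
        # Exponential decay with time since the earlier crime
        temporal = omega * math.exp(-omega * dt)
        # Gaussian spread in space around the earlier crime
        d2 = (x - e.x) ** 2 + (y - e.y) ** 2
        spatial = math.exp(-d2 / (2 * sigma**2)) / (2 * math.pi * sigma**2)
        rate += theta * temporal * spatial
    return rate


# One burglary at the origin on day 0
events = [Event(0.0, 0.0, 0.0)]
near = intensity(0.05, 0.0, 1.0, events)    # 50 m away, one day later
far = intensity(2.0, 0.0, 1.0, events)      # 2 km away, one day later
later = intensity(0.05, 0.0, 30.0, events)  # 50 m away, a month later
print(f"near={near:.3f} far={far:.3f} later={later:.3f}")
```

In this toy setup `near` sits well above the background rate, `far` is essentially equal to it, and `later` has decayed almost back to it: elevated risk close to a recent crime, fading over distance and time.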

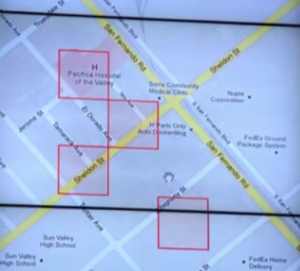

Only with a data set as large as 80 years and 13 million crimes can you really make this algorithm work and start to predict the future. In practice, LAPD patrols were given “mission maps” highlighting 500-by-500-foot boxes where crimes were predicted to be most likely during their 12-hour watch.
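A mission map can be pictured as a ranking over grid cells. The example below is hypothetical: it assumes square cells 500 feet on a side, scores each cell with a simple time-decayed count of recent crimes (half-weighted for the eight neighbouring cells, as a crude stand-in for risk spreading to nearby areas), and returns the top `k` cells. The names `cell_of` and `mission_map` and the decay rate are illustrative only; a production system would score cells with its own fitted model.

```python
import heapq
import math
from collections import defaultdict

CELL_FT = 500  # assumed box side length in feet


def cell_of(x_ft, y_ft, cell_ft=CELL_FT):
    """Map a location (feet from an arbitrary origin) to its grid cell index."""
    return (math.floor(x_ft / cell_ft), math.floor(y_ft / cell_ft))


def mission_map(events, now, k=3, decay_per_day=0.2, cell_ft=CELL_FT):
    """Return the k (cell, score) pairs with the highest decayed risk score.

    events: iterable of (x_ft, y_ft, t_days) for past crimes.
    Each past crime adds exp(-decay * age) to its own cell and half of that
    to each of its 8 neighbours. Hypothetical scoring, for illustration only.
    """
    score = defaultdict(float)
    for x, y, t in events:
        age = now - t
        if age < 0:
            continue  # ignore events stamped after `now`
        weight = math.exp(-decay_per_day * age)
        cx, cy = cell_of(x, y, cell_ft)
        for dx in (-1, 0, 1):
            for dy in (-1, 0, 1):
                share = 1.0 if dx == 0 and dy == 0 else 0.5
                score[(cx + dx, cy + dy)] += share * weight
    return heapq.nlargest(k, score.items(), key=lambda kv: kv[1])


# Two recent crimes in one box, one old crime far away
events = [(100, 100, 9.0), (200, 300, 9.5), (5000, 5000, 0.0)]
for cell, s in mission_map(events, now=10.0, k=3):
    print(cell, round(s, 3))
```

Because the map is recomputed from the event list, appending newly reported crimes to `events` and calling `mission_map` again is one simple way to keep it current.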

Early results from the LAPD pilot have shown that, with the algorithm in use, property crime fell by 12% and burglary by 26% in the Foothill division – pretty interesting stats. Importantly, the crime prediction model is updated in real time with new crime data to make it even more accurate.

Using Big Data to predict consumer behaviour

It got me thinking: if big data can be used not only to predict crime but also to turn those predictions into arrests, then the marketing community can use cues from social big data and purchase-history big data to predict future purchase patterns.

Applying the aftershock analogy, after a customer buys from a particular brand (and has a good experience doing so), there is a high probability that they will buy from it again. Social data adds an extra dimension, letting us start to detect patterns around recommendations and product reviews.
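To make the purchase analogy concrete, here is a hypothetical sketch that treats one customer's purchases from a brand as a purely temporal self-exciting process: each purchase temporarily raises the rate of further purchases, and that boost decays exponentially. The function name, its parameters and their values are all assumptions for illustration, and the sketch ignores factors mentioned above such as purchase experience and social signals. It returns the probability of at least one purchase in the next `horizon` days, using the fact that the probability of no event in a window is exp(-∫λ(s) ds), with the intensity λ evaluated on the history so far.

```python
import math


def repeat_purchase_probability(purchase_days, now, horizon,
                                mu=0.01, alpha=0.3, beta=0.1):
    """P(at least one purchase in (now, now + horizon]) under a temporal
    self-exciting model with intensity
        lambda(s) = mu + sum_i alpha * beta * exp(-beta * (s - t_i)).

    Hypothetical parameters:
    mu:    baseline purchase rate per day
    alpha: expected extra purchases triggered by each past purchase
    beta:  decay rate of that boost, per day
    """
    # Integrated intensity over the window, given the current history
    expected = mu * horizon
    for t_i in purchase_days:
        if t_i > now:
            continue  # only purchases up to `now` count as history
        expected += alpha * (math.exp(-beta * (now - t_i))
                             - math.exp(-beta * (now + horizon - t_i)))
    return 1.0 - math.exp(-expected)


p_none = repeat_purchase_probability([], now=100, horizon=30)
p_recent = repeat_purchase_probability([95], now=100, horizon=30)
p_old = repeat_purchase_probability([0], now=100, horizon=30)
print(f"no history={p_none:.3f} recent={p_recent:.3f} old={p_old:.3f}")
```

A purchase five days ago lifts the 30-day repeat probability above the baseline, while one made 100 days ago has almost no effect, mirroring how aftershock risk fades over time.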

I’m not suggesting that we go as far as linking individual tweets to purchases: big data is not about any single tweet, but about the patterns we find across the data.

I believe that if individual brands start to harness the power of big social data (and that means becoming a social business), they can pull ahead of their competition.

Angela Ahrendts, former CEO of Burberry, was quoted recently in a Capgemini Consulting report as saying: “Consumer data will be the biggest differentiator in the next two to three years. Whoever unlocks the reams of data and uses it strategically will win.”

Placing a bet on big data is not for the faint-hearted.

The brands that will lead the big data race have already started, though. Watch this space.